Two Tools, Two Philosophies

The AI agent ecosystem is converging on a fundamental split: agents need to know things and they need to do things. Andrew Ng's Context Hub and OpenClaw's ClawHub each address one side of that divide — and understanding the distinction is key to building agents that actually work.

Context Hub: The Reference Layer

Context Hub is a curated documentation layer. It serves agents accurate, versioned API signatures spanning 605 libraries and counting. When a coding agent needs to call a Stripe endpoint or compose a Twilio message, Context Hub provides the exact method signatures, parameter types, and return values, which removes much of the guesswork that leads to hallucinated API calls.

What makes it more than a static docs mirror is its annotation system. Agents can record what they learned in prior interactions as practical notes layered on top of the canonical documentation, so later sessions see both the official reference and the accumulated advice. Think of it as a well-organized reference library where every book also contains margin notes from previous readers.
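To make the "reference plus margin notes" idea concrete, here is a minimal in-memory sketch. It is not Context Hub's actual schema or API: `DocEntry`, `ReferenceLayer`, and the `examplepay` library are hypothetical names invented for illustration, and a real deployment would query the hub rather than a local dictionary.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class DocEntry:
    """One versioned reference entry (hypothetical shape, not Context Hub's schema)."""
    library: str
    version: str
    signature: str
    annotations: list[str] = field(default_factory=list)  # the "margin notes"


class ReferenceLayer:
    """Toy store keyed by (library, version, symbol)."""

    def __init__(self) -> None:
        self._entries: dict[tuple[str, str, str], DocEntry] = {}

    def add(self, symbol: str, entry: DocEntry) -> None:
        self._entries[(entry.library, entry.version, symbol)] = entry

    def lookup(self, library: str, version: str, symbol: str) -> DocEntry | None:
        # Version is part of the key, so a stale version never matches silently.
        return self._entries.get((library, version, symbol))

    def annotate(self, library: str, version: str, symbol: str, note: str) -> bool:
        """Attach a note to an existing entry; return False if there is none."""
        entry = self.lookup(library, version, symbol)
        if entry is None:
            return False
        entry.annotations.append(note)
        return True


layer = ReferenceLayer()
layer.add(
    "create_charge",
    DocEntry("examplepay", "2.1.0", "create_charge(amount: int, currency: str) -> Charge"),
)
layer.annotate("examplepay", "2.1.0", "create_charge", "amount is in minor units")
```

Keying on the version is the design choice that matters here: an agent asking about a version the store does not hold gets nothing back instead of a plausible but wrong signature.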

ClawHub: The Execution Layer

ClawHub takes a fundamentally different approach. Rather than describing what an API looks like, it prescribes how to accomplish a task end-to-end. Each skill in ClawHub is a complete playbook — step-by-step instructions, helper scripts, configuration templates, and error-handling logic bundled together.

Where Context Hub answers "What parameters does this function accept?", ClawHub answers "Here is the full workflow to set up Stripe webhook monitoring, including the scripts and config files you need."
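A playbook of this kind can be pictured as ordered steps with bundled configuration and per-step error handling. The sketch below is an assumption about the general shape, not ClawHub's real skill format: `Step`, `Skill`, and the example step functions are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    """One playbook step (hypothetical; not ClawHub's skill format)."""
    name: str
    action: Callable[[dict], None]  # reads and mutates the shared context
    # Optional handler; returns True if the error was recovered.
    on_error: Callable[[dict, Exception], bool] | None = None


@dataclass
class Skill:
    name: str
    steps: list[Step]
    config: dict = field(default_factory=dict)  # stands in for config templates

    def run(self) -> dict:
        ctx = dict(self.config)
        ctx["completed"] = []
        for step in self.steps:
            try:
                step.action(ctx)
            except Exception as exc:
                # Re-raise unless the step ships a handler that recovers.
                if step.on_error is None or not step.on_error(ctx, exc):
                    raise
            ctx["completed"].append(step.name)
        return ctx


def build_endpoint(ctx: dict) -> None:
    ctx["endpoint"] = ctx["base_url"] + "/webhooks"


def flaky_register(ctx: dict) -> None:
    raise RuntimeError("registration timed out")


def note_and_continue(ctx: dict, exc: Exception) -> bool:
    ctx["warning"] = str(exc)
    return True


skill = Skill(
    name="webhook-monitoring-demo",
    steps=[Step("build_endpoint", build_endpoint),
           Step("register", flaky_register, note_and_continue)],
    config={"base_url": "https://example.test"},
)
result = skill.run()
```

The point of bundling the handler with the step is that the agent does not have to invent recovery logic at run time; the playbook author has already decided what counts as recoverable.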

The Core Distinction

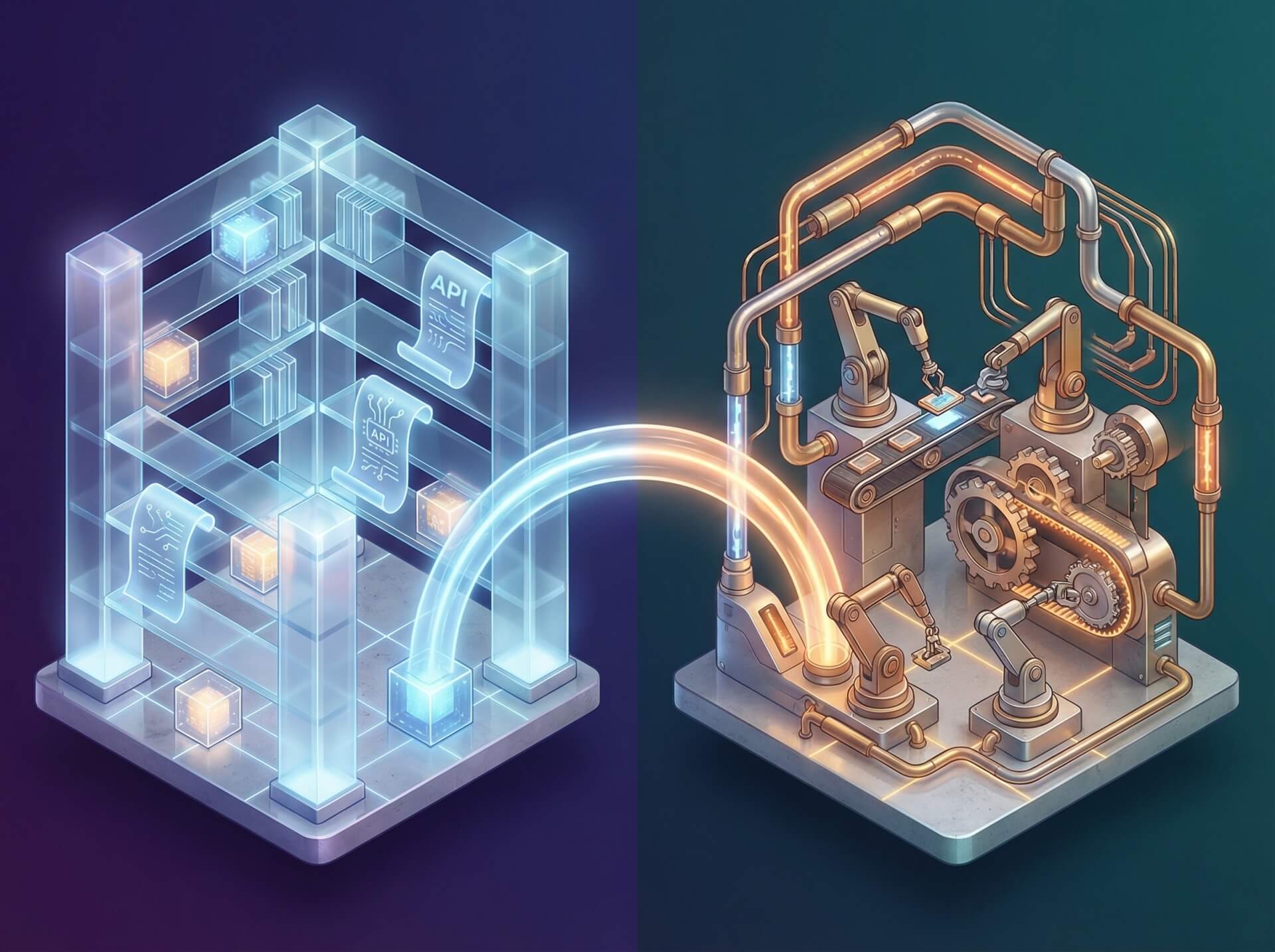

The simplest way to frame this:

Context Hub is a library. ClawHub is a workshop.

A library gives you accurate reference material so you can build anything. A workshop gives you jigs, templates, and proven procedures so you can build specific things fast. Neither replaces the other.

Where They Complement Each Other

Consider a practical scenario: setting up Stripe payment monitoring.

- Context Hub alone — the agent gets precise Stripe API documentation and can reason about endpoints, but it must independently design the monitoring architecture, write the glue code, and handle edge cases from scratch.

- ClawHub alone — the agent receives a battle-tested monitoring playbook that works out of the box, but if the use case deviates from the template (say, a custom webhook filter), the agent lacks the detailed API reference to extend the skill confidently.

- Both together — the agent executes ClawHub's proven workflow for the standard path, then pulls Context Hub documentation whenever it needs to adapt, extend, or troubleshoot. The playbook provides speed; the documentation provides flexibility.

This is the pattern that scales: pre-built skills for the common path, precise documentation for the edges.
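The routing logic behind that pattern can be sketched in a few lines: use the playbook for whatever it covers, and look up documentation only for the requirements it does not. Everything named here is hypothetical (`Plan`, `plan_task`, the skill name `stripe-webhook-monitoring`, and the requirement labels); neither tool is known to expose this interface.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Plan:
    skill: str | None       # playbook to run, if one matches the task
    doc_lookups: list[str]  # topics to fetch from the reference layer


def plan_task(
    task: str,
    requirements: set[str],
    skills: dict[str, set[str]],
    docs: set[str],
) -> Plan:
    """Split a task between a playbook and reference lookups.

    skills maps a skill name to the requirements it covers out of the box;
    docs is the set of topics the reference layer can answer.
    """
    covered = skills.get(task)
    if covered is None:
        skill, gaps = None, set(requirements)  # no playbook: docs only
    else:
        skill, gaps = task, requirements - covered  # playbook plus edge cases
    missing = gaps - docs
    if missing:
        raise LookupError(f"no playbook or reference for: {sorted(missing)}")
    return Plan(skill=skill, doc_lookups=sorted(gaps))


skills = {"stripe-webhook-monitoring": {"endpoint", "retries"}}
docs = {"endpoint", "retries", "custom-filter"}

standard = plan_task("stripe-webhook-monitoring", {"endpoint", "retries"}, skills, docs)
custom = plan_task("stripe-webhook-monitoring", {"endpoint", "custom-filter"}, skills, docs)
```

In this sketch the standard request needs no lookups at all, while the custom webhook filter from the scenario above triggers exactly one documentation lookup on top of the playbook.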

Practical Setup

The two tools stack cleanly because they sit at different layers. Context Hub installs via npm and integrates as a documentation provider, while ClawHub skills slot in as task executors. For teams running on a managed platform, both can coexist alongside existing agent configurations with minimal overhead: Context Hub reduces API errors at the reference level, and ClawHub accelerates task completion at the execution level.

The Takeaway

The question is not "Context Hub or ClawHub?" Agents that only have documentation write correct code slowly. Agents that only have playbooks break when requirements shift. The combination of accurate references layered under executable skills is what produces agents that are both fast and resilient.

Build the library. Stock the workshop. Your agents need both.